Confluent Kafka Docker Image

Ever heard of Kafka? It sounds a bit like a cool, independent coffee shop, right? Well, in the tech world it's even cooler: it acts as a super-efficient messenger that carries streams of events between the different parts of a system. Setting up this messenger yourself can be a bit complex, and that's where the Confluent Kafka Docker image comes in, making things much easier. Learning about it isn’t just for software engineers; it’s a way to understand the data flows that power our modern digital lives, and that’s pretty relevant (and dare I say, a little fun!).

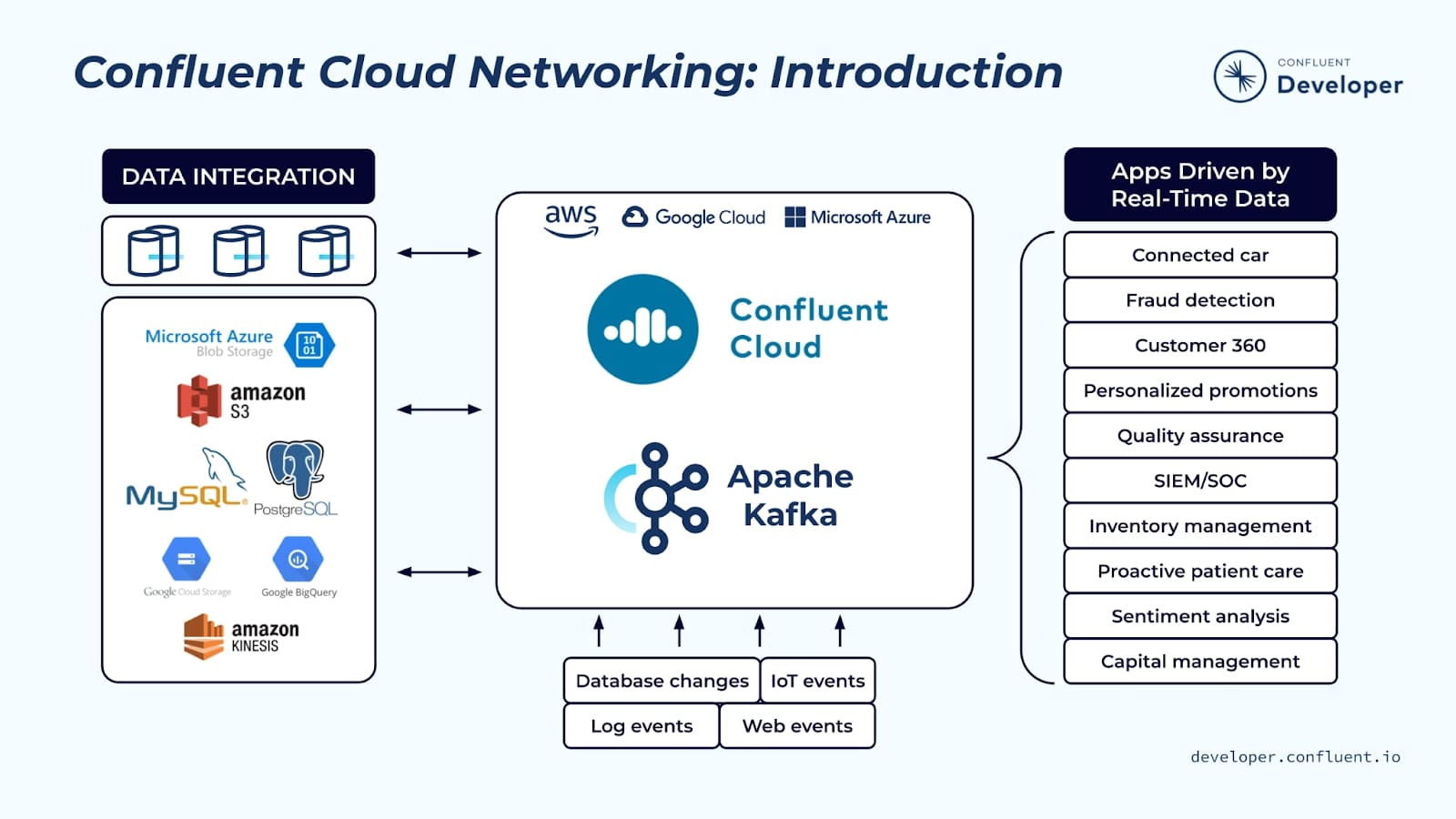

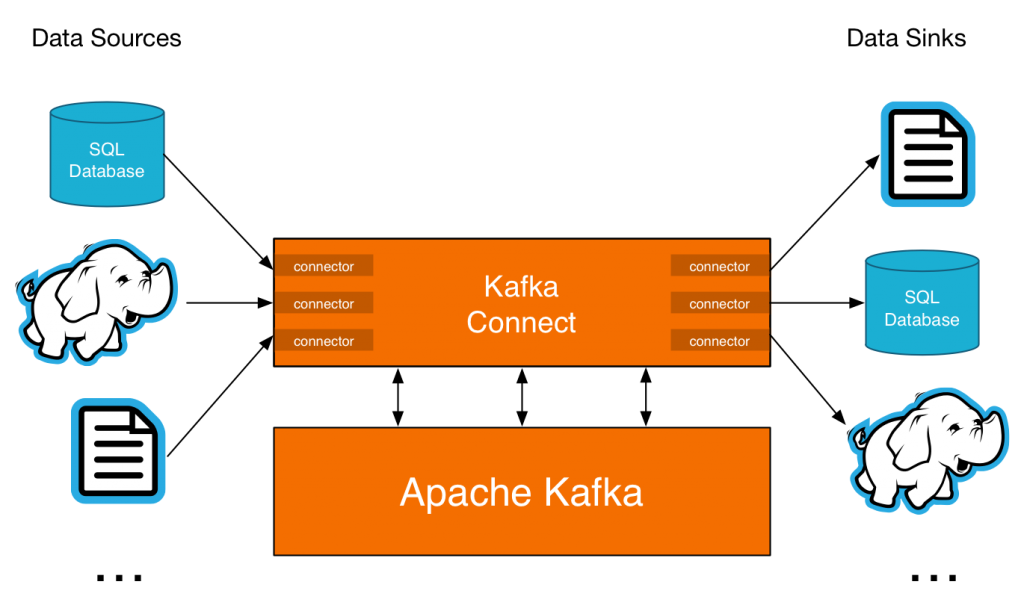

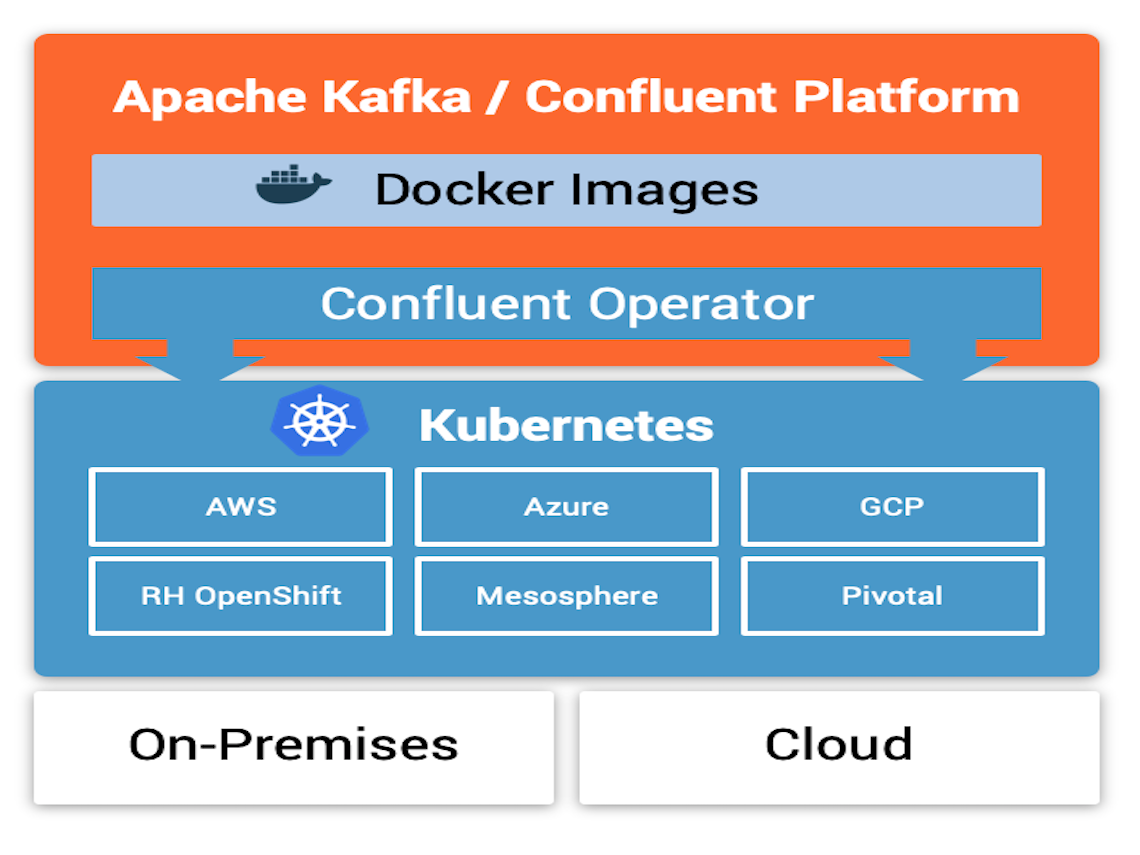

At its heart, the Confluent Kafka Docker image (`confluentinc/cp-kafka`) is a pre-packaged build of Apache Kafka, created and maintained by Confluent, the company founded by Kafka's original creators. It bundles the Kafka broker and Kafka's command-line tools (such as `kafka-topics` and `kafka-console-producer`) into a self-contained package called a Docker image, which you run as a Docker container. ZooKeeper, which older Kafka deployments use to manage cluster metadata, is not part of this image: Confluent ships it as a separate image (`confluentinc/cp-zookeeper`), and recent Kafka releases can run in KRaft mode without ZooKeeper at all. Think of it like a ready-made app that you can launch on your computer, except that what you launch is a powerful data streaming platform.

The purpose is simple: to drastically simplify setting up and managing Kafka. The benefits fall into three groups. First, consistency: everyone using the same image tag gets the same Kafka version and dependencies, which removes a whole class of "works on my machine" problems. Second, portability: the same image runs on your laptop, on a server, or in the cloud. Third, ease of use: instead of installing Java and hand-editing properties files, you pass settings to the container as environment variables, so a single-broker setup for local experiments can be running within minutes.

So, where might you encounter Kafka, even if you don't realize it? Imagine an online store. Every time you browse a product, add something to your cart, or place an order, that action can be recorded as an event and streamed through Kafka. This lets the store analyze your behavior in real time, personalize your experience, and process your orders efficiently. In education, researchers might use Kafka to collect and analyze data from sensors in a lab, or to stream student activity data from a learning management system. Even the service behind your fitness tracker may rely on Kafka or a similar system to handle the continuous stream of data from your wrist.
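Under the hood, Kafka stores each topic as an append-only log, and every reader keeps track of its own position (its offset) in that log. That is why an analytics system and an order-processing system can consume the same stream of shop events without getting in each other's way. The toy Python model below illustrates only that idea: the `Topic` and `Reader` classes and the event fields are hypothetical and are not Kafka's client API. Real topics are split into partitions and stored durably on brokers, and consumer groups commit their offsets to Kafka.

```python
from dataclasses import dataclass, field


@dataclass
class Topic:
    """Toy single-partition topic: events are only ever appended, never modified."""
    name: str
    log: list = field(default_factory=list)

    def append(self, event: dict) -> int:
        self.log.append(event)
        return len(self.log) - 1  # offset assigned to the new event


@dataclass
class Reader:
    """Toy consumer: remembers the offset of the next event it has not read yet."""
    topic: Topic
    offset: int = 0

    def poll(self) -> list:
        new_events = self.topic.log[self.offset:]
        self.offset = len(self.topic.log)  # advance past everything just returned
        return new_events


shop_events = Topic("shop-events")
analytics = Reader(shop_events)
orders = Reader(shop_events)

shop_events.append({"type": "view", "product": "mug"})
shop_events.append({"type": "add_to_cart", "product": "mug"})
seen_by_analytics = analytics.poll()  # analytics catches up on both events

shop_events.append({"type": "order", "product": "mug"})
# The order processor has its own offset (still 0), so it sees the whole log
# regardless of what analytics has read; it keeps only the order events.
new_orders = [e for e in orders.poll() if e["type"] == "order"]
```

Because reading never removes anything from the log, adding another consumer later (say, a recommendation service) would simply mean creating one more `Reader` that starts at offset 0.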

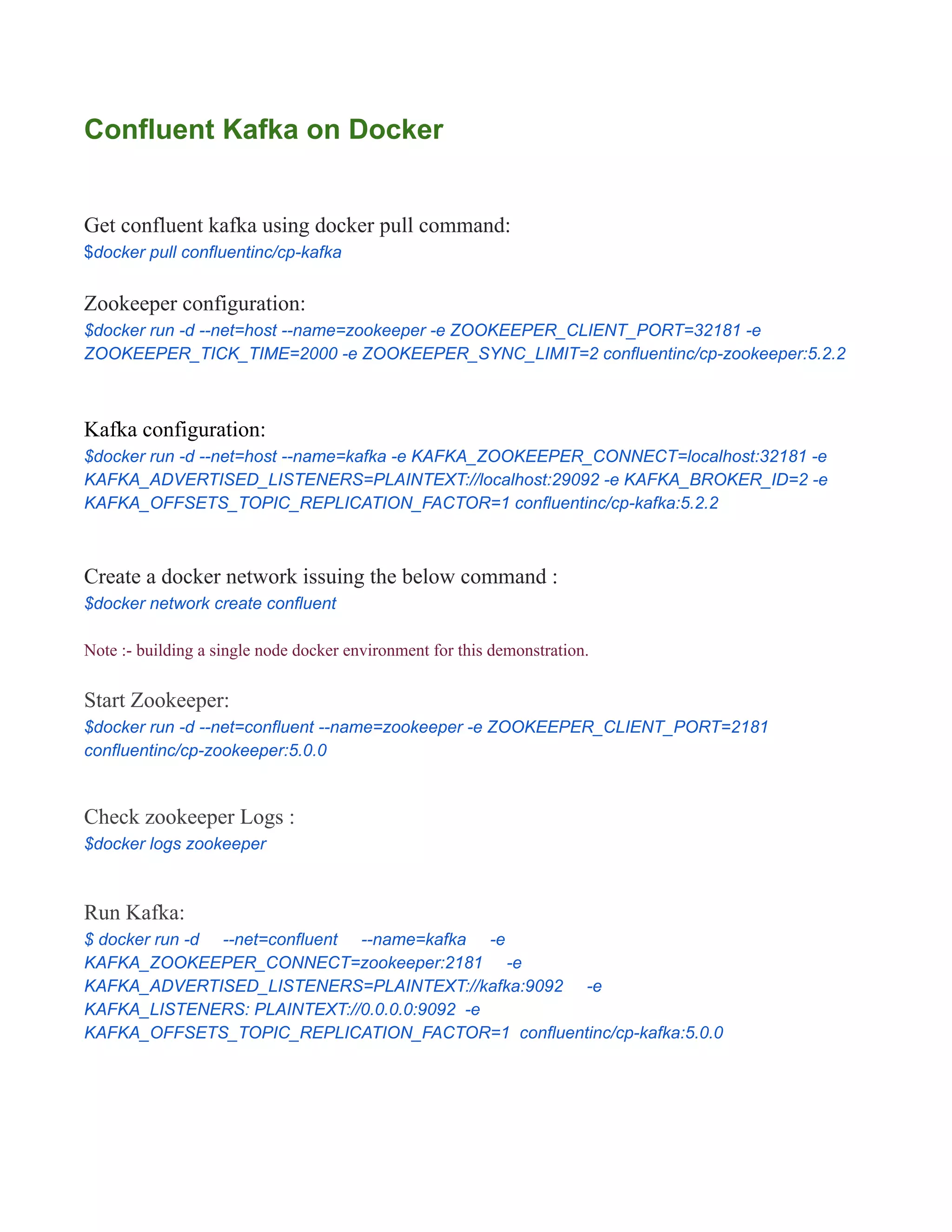

Ready to dip your toes in? A simple way to explore is to install Docker Desktop (it’s free for personal use). Then open a terminal or command prompt and start a broker with `docker run`. Note that a bare `docker run -p 9092:9092 confluentinc/cp-kafka:latest` typically exits with a configuration error: the broker needs a few settings, such as its listeners and, in KRaft mode, a cluster ID, which you pass to the container as environment variables with `-e`. You also don't need `-p 2181:2181`, because 2181 is ZooKeeper's port and ZooKeeper doesn't run in this image. The official Confluent documentation has detailed quick starts, including ready-made Docker Compose files, that show working settings. Once the broker is running, use the Kafka command-line tools to create a topic (a named stream that messages are written to), send a few messages to it, and read them back. Don't be afraid to experiment! Start small, and gradually explore the different features and configurations. And remember, the Docker image keeps everything neatly contained, so if you mess something up, you can remove the container and start over.
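To make those settings concrete, here is a hedged Python sketch that assembles a `docker run` command for a single-node cp-kafka broker in KRaft mode (one process acting as both broker and controller). It relies on Confluent's documented naming rule for this image: each broker property becomes an environment variable by prefixing `KAFKA_`, upper-casing, and replacing `.` with `_`, `-` with `__`, and `_` with `___`. The listener values, the container name `kafka`, and the `CLUSTER_ID` placeholder are assumptions for a local experiment, not settings taken from this article. Check them against the Confluent quick start for the image tag you pull, and generate your own cluster ID (for example with Kafka's `kafka-storage random-uuid` command).

```python
import shlex


def property_to_env(prop: str) -> str:
    """Map a broker property name to the environment variable name cp-kafka expects."""
    # Escape existing underscores and dashes first, then turn dots into single
    # underscores; converting dots first would wrongly triple those underscores.
    name = prop.replace("_", "___").replace("-", "__").replace(".", "_")
    return "KAFKA_" + name.upper()


# Assumed settings for a local, single-node KRaft broker (broker + controller in one process).
# Clients on the host connect to localhost:9092; the controller listener stays inside the container.
SINGLE_NODE_KRAFT = {
    "node.id": "1",
    "process.roles": "broker,controller",
    "listeners": "PLAINTEXT://0.0.0.0:9092,CONTROLLER://localhost:9093",
    "advertised.listeners": "PLAINTEXT://localhost:9092",
    "listener.security.protocol.map": "PLAINTEXT:PLAINTEXT,CONTROLLER:PLAINTEXT",
    "controller.listener.names": "CONTROLLER",
    "controller.quorum.voters": "1@localhost:9093",
    "inter.broker.listener.name": "PLAINTEXT",
    # The defaults for these internal topics assume a multi-broker cluster.
    "offsets.topic.replication.factor": "1",
    "transaction.state.log.replication.factor": "1",
    "transaction.state.log.min.isr": "1",
}


def docker_run_args(image: str, props: dict, cluster_id: str, name: str = "kafka") -> list:
    """Build the argument list for `docker run`; `name` is a hypothetical container name."""
    args = ["docker", "run", "-d", "--name", name, "-p", "9092:9092"]
    for prop, value in props.items():
        args += ["-e", f"{property_to_env(prop)}={value}"]
    # The cluster ID is passed as CLUSTER_ID (no KAFKA_ prefix), as in Confluent's KRaft examples.
    args += ["-e", f"CLUSTER_ID={cluster_id}"]
    args.append(image)
    return args


if __name__ == "__main__":
    # Placeholder ID: replace it with your own base64-encoded UUID.
    cmd = docker_run_args("confluentinc/cp-kafka:latest", SINGLE_NODE_KRAFT, "MkU3OEVBNTcwNTJENDM2Qk")
    print(shlex.join(cmd))
```

Running the script prints a single `docker run ...` line to paste into a terminal. Once the container is up, the Kafka tools inside it can be reached with `docker exec`, for example `docker exec -it kafka kafka-topics --bootstrap-server localhost:9092 --create --topic demo`, followed by `kafka-console-producer` and `kafka-console-consumer --from-beginning` against the same topic to send messages and read them back. Generating the flags from a dictionary simply keeps the property names readable; typing the `-e` flags by hand, or moving them into a Docker Compose file, works just as well.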